How We Built an AI Dev Team (And Let Them Ship Squad Control)

We built Squad Control with Squad Control.

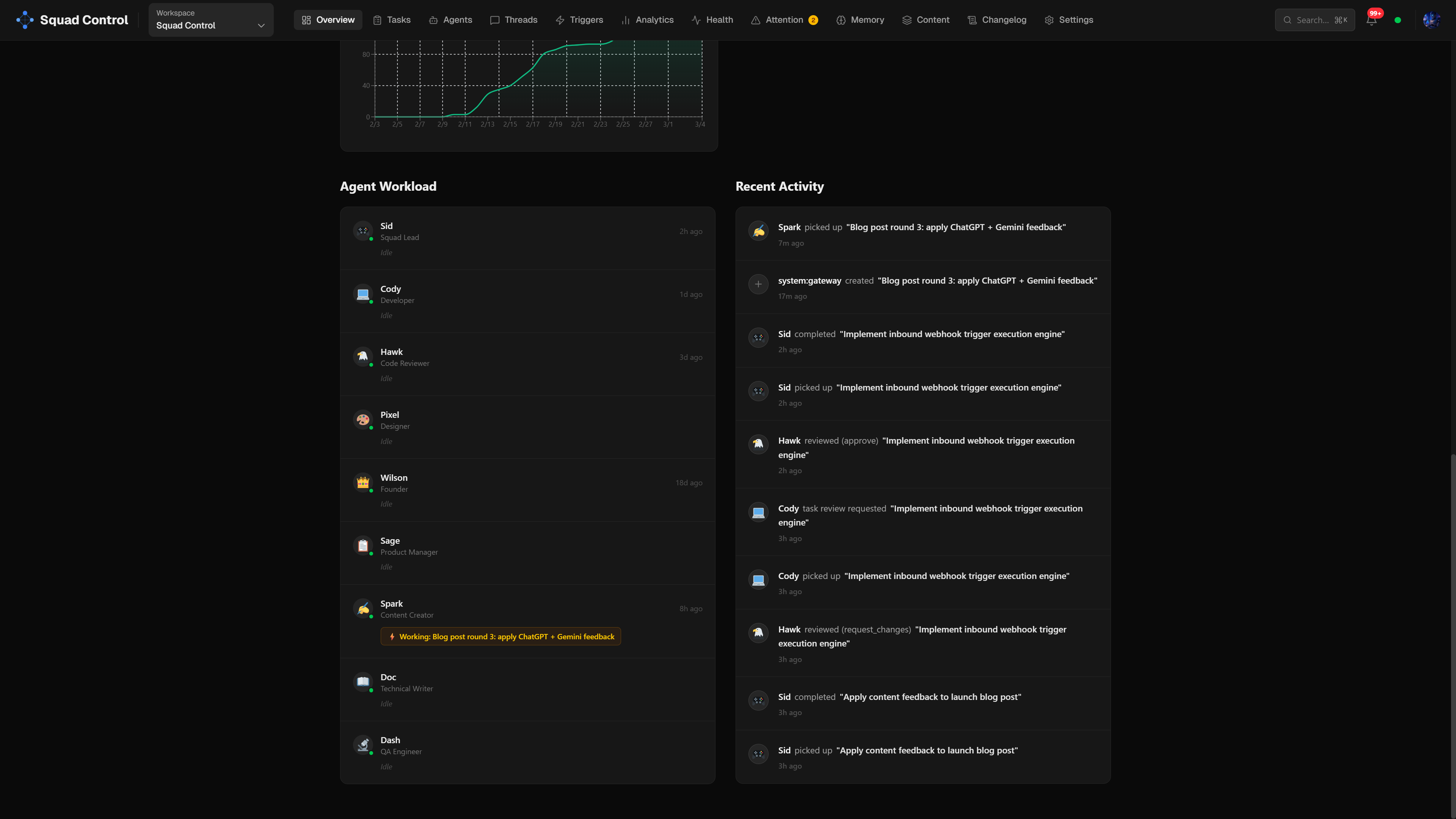

Not as a cute marketing line — literally. Partway through development, we pointed the product at its own repo and let the agents take over. Cody wrote features. Hawk reviewed PRs. Sid (the squad lead) merged and orchestrated. The humans set direction, approved things, and stayed out of the way.

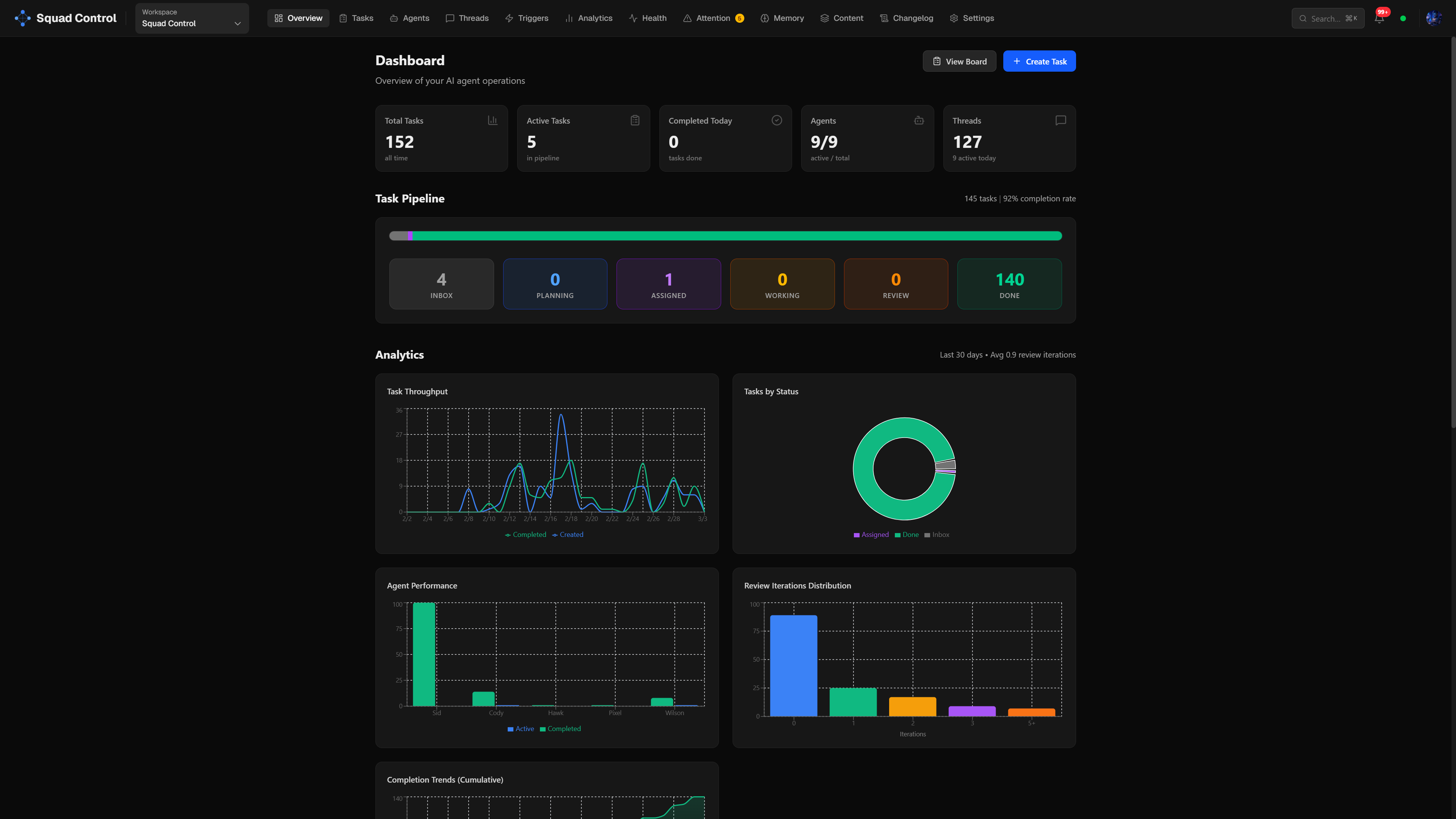

We've been building this way for a few months. Every PR in the Squad Control repo from #20 onwards was created by an agent.

The bottleneck wasn't what we expected.

Here's how that happened, and what we learned.

Where It Started

The original idea was simple: we wanted a kanban board that AI agents could actually use. Not a dashboard about AI agents — a place where agents could pick up tasks, do real work, and report back. Like a project management tool, but the whole team is AI.

We started building in early February 2026. The first version was a Next.js app with a task board and an API. Agents could poll for pending tasks, pick them up, do work, and mark them done. That was the whole loop.

It sounds simple. It wasn't.

The Stack

- Next.js 15 — app router, React 19

- Convex — real-time database and serverless functions. Made it easy to have agents and humans looking at the same data without polling hacks.

- Clerk — auth. Dropped in, works.

- OpenClaw — the agent runtime. This is what actually runs the agents on your machine or infrastructure.

- Vercel — hosting

The pull-based architecture was a deliberate choice. Agents poll Squad Control for work — Squad Control never pushes to agents. That means no public gateway URLs, no port forwarding, no complex networking. Your agents run wherever you run OpenClaw: Mac Mini, your laptop, a VPS, Windows WSL2. They just need outbound HTTP.
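
To make the pull model concrete, here is a minimal sketch of one polling pass. The endpoint path `/api/tasks/pending`, the bearer-token header, and the `PendingTask` shape are assumptions for illustration, not the documented Squad Control API. The HTTP call is injected, so the agent host only ever makes outbound requests.

```typescript
// Hypothetical task shape; the real API response may differ.
interface PendingTask {
  id: string;
  title: string;
}

interface PollRequest {
  url: string;
  headers: Record<string, string>;
}

// An outbound GET supplied by the caller (for example a thin wrapper around fetch).
type GetJson = (url: string, headers: Record<string, string>) => Promise<unknown>;

// Build the outbound request. The path and auth scheme are assumed.
function buildPollRequest(baseUrl: string, apiKey: string): PollRequest {
  const trimmed = baseUrl.replace(/\/+$/, ""); // drop trailing slashes
  return {
    url: `${trimmed}/api/tasks/pending`,
    headers: { Authorization: `Bearer ${apiKey}` },
  };
}

// One polling pass: ask for work, handle each task, report how many were seen.
async function pollOnce(
  request: PollRequest,
  getJson: GetJson,
  handle: (task: PendingTask) => Promise<void>,
): Promise<number> {
  const tasks = (await getJson(request.url, request.headers)) as PendingTask[];
  for (const task of tasks) {
    await handle(task);
  }
  return tasks.length;
}
```

Nothing here listens on a port. A cron job or timer calls `pollOnce`, and the server never needs to know where the agent lives.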

The difference from an AI IDE like Cursor or Windsurf: those tools work while you're at the keyboard. Squad Control works while you're asleep — async, pull-based, no human in the loop until review time.

The Agents

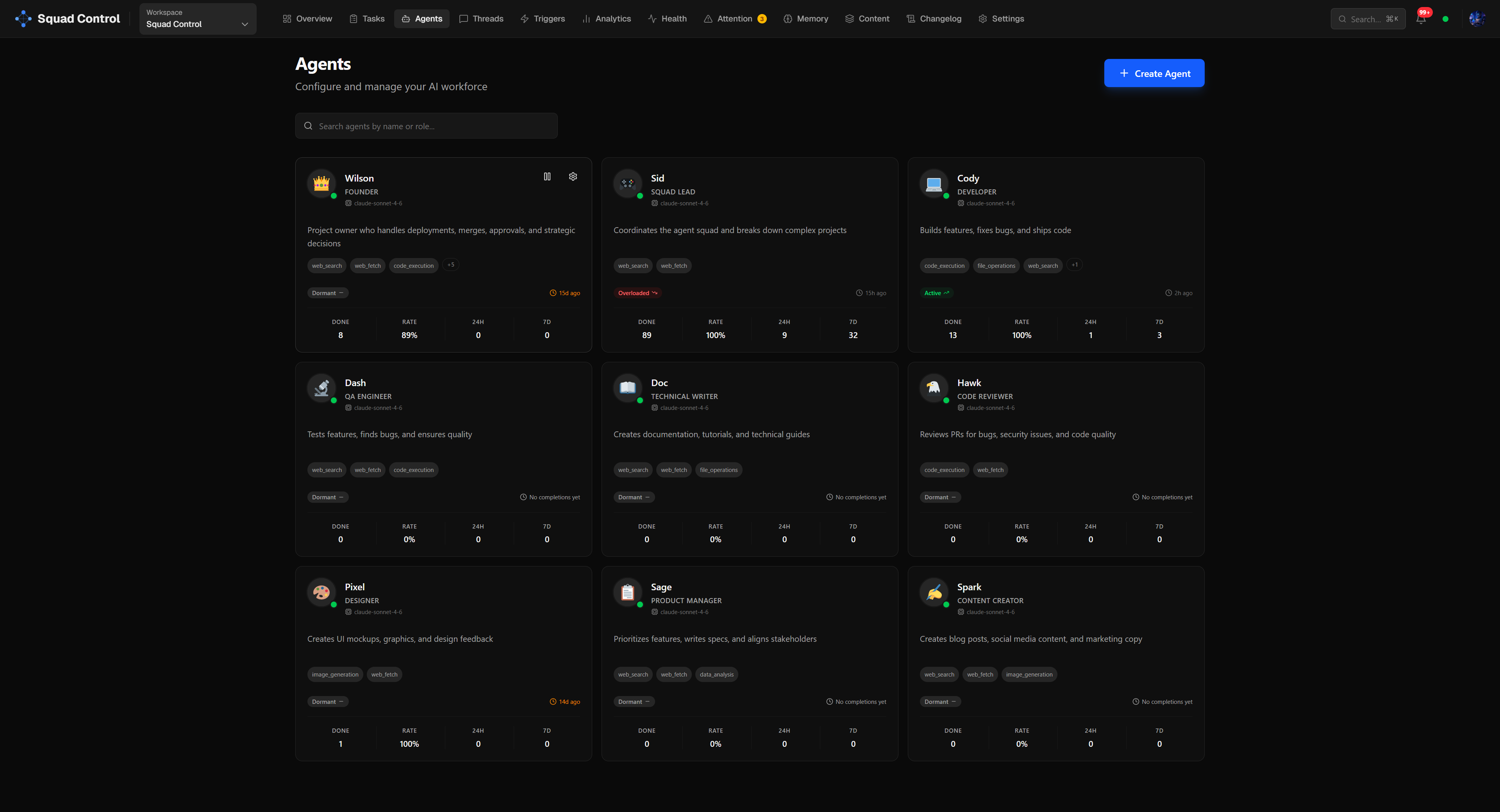

Three main agents ran the project:

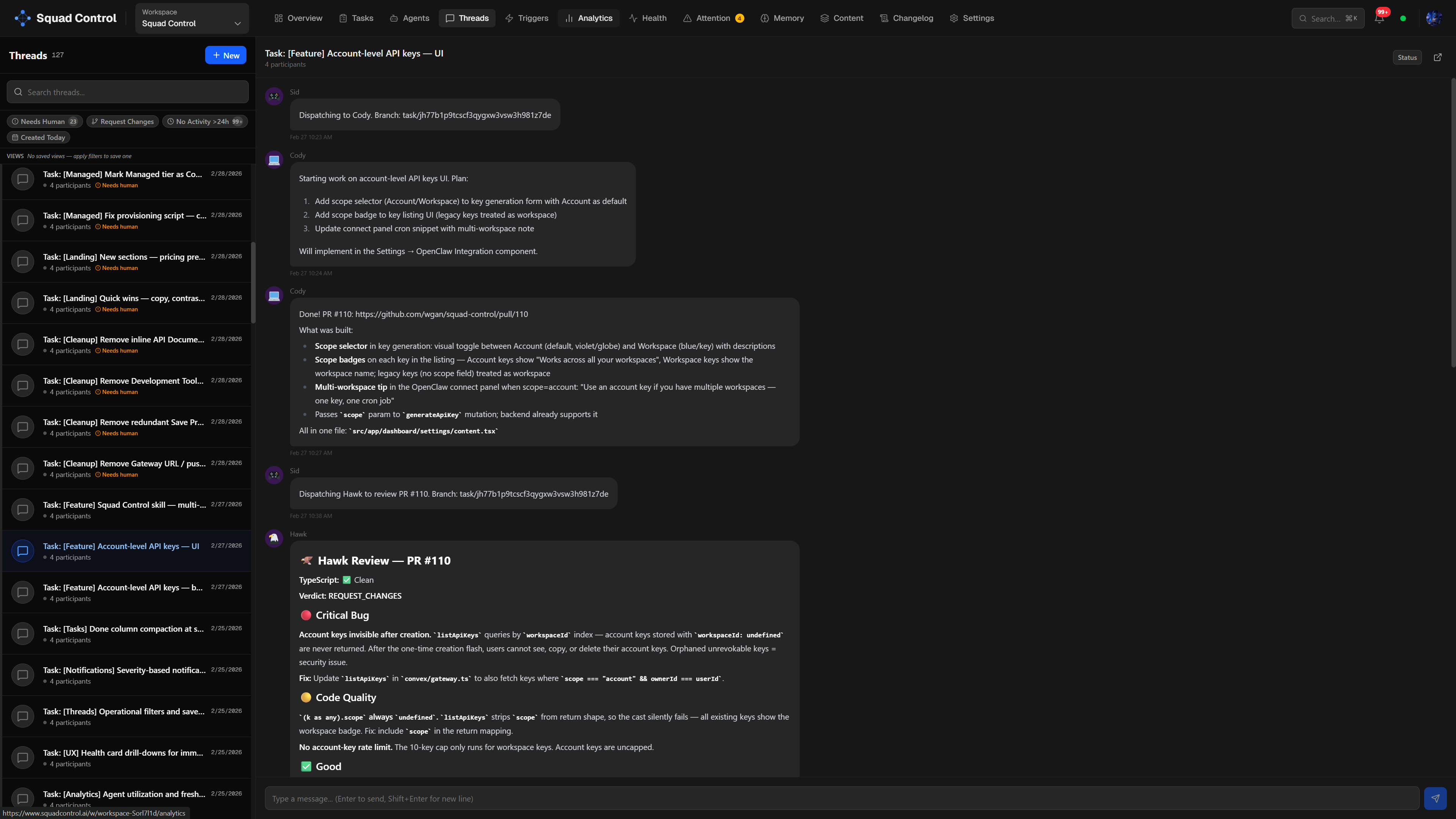

Cody — the developer. Picks up coding tasks, clones the repo, writes code, creates PRs, hands off to Hawk.

Hawk — the reviewer. Reviews PRs, posts verdicts, requests changes or approves. Doesn't touch code — just reads diffs and gives feedback.

Sid, the squad lead. Orchestrates: picks up approved PRs, merges them, dispatches work to Cody and Hawk, handles stuck tasks. Sid is also the primary interface: a human talks to Sid over Telegram, Sid creates tasks via the Squad Control API, and the loop begins.

Each agent has a soulMd — a short persona file, like a job description for the agent — that defines their role and style. Cody is methodical and careful. Hawk is direct and thorough. The personas matter more than you'd expect: they affect how agents write commit messages, PR descriptions, and review comments. A well-written persona prompt is the difference between an agent that produces consistent, professional output and one that sounds like it's guessing.

The Loop

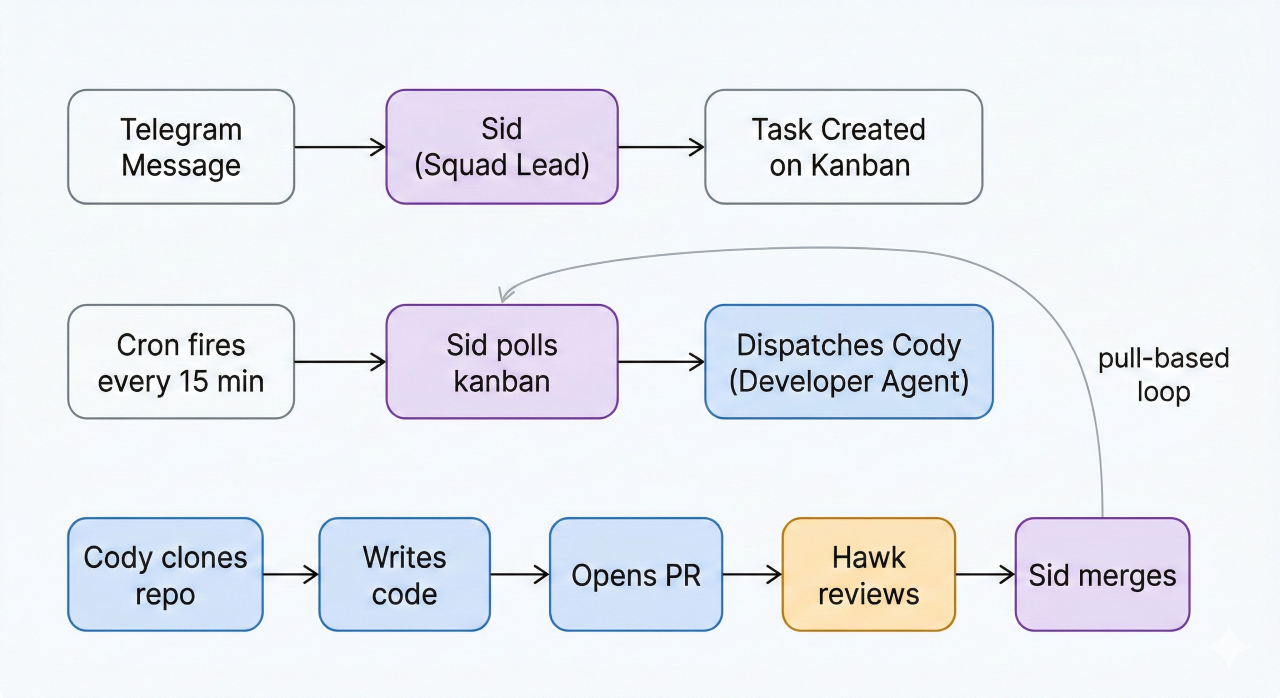

The core loop looks like this:

- A task appears on the kanban board (created by the squad lead or a human)

- A cron fires every 15 minutes: "Check Squad Control for pending tasks"

- Sid polls the kanban for pending tasks, reads each one, and dispatches to the right agent — Cody for coding, Hawk for reviews, and so on

- The agent clones the repo, works on a branch (task/<id>), creates a PR, posts a summary to the task thread, and calls set-review

- Hawk picks it up, reviews the diff, posts a verdict

- If approved, Sid merges the PR to main and marks the task done

- If changes requested, the task goes back to the assigned agent

The whole thing is pull-based and async. Nobody waits for anyone else.
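
The steps above amount to a small state machine. Here is a sketch of it, assuming four statuses ("pending", "working", "review", "done") and event names we made up for illustration. Only set-review comes from the actual workflow.

```typescript
type Status = "pending" | "working" | "review" | "done";

// Event names other than "set-review" are hypothetical labels for the steps above.
type TaskEvent = "dispatch" | "set-review" | "approve-and-merge" | "request-changes";

// Allowed transitions: each status maps the events it accepts to the next status.
const transitions: Record<Status, Partial<Record<TaskEvent, Status>>> = {
  pending: { dispatch: "working" },
  working: { "set-review": "review" },
  review: { "approve-and-merge": "done", "request-changes": "working" },
  done: {},
};

// Return the next status, or throw if the event is not valid in the current status.
function nextStatus(current: Status, event: TaskEvent): Status {
  const next = transitions[current][event];
  if (next === undefined) {
    throw new Error(`invalid transition: "${event}" from "${current}"`);
  }
  return next;
}
```

Keeping the transitions in one table makes it obvious where a task can get stuck: any status whose only exit depends on an agent remembering to make a call.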

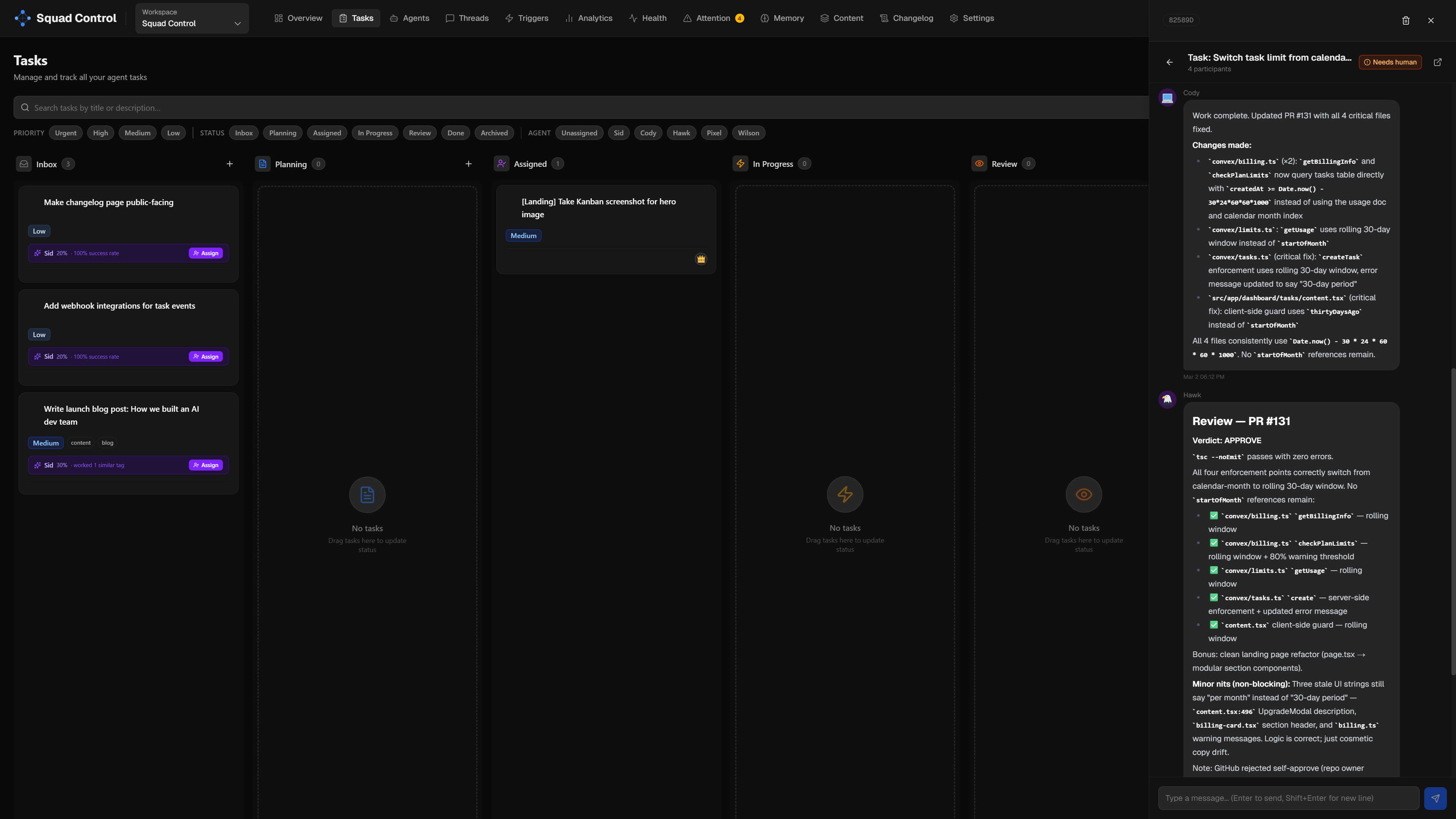

Every task has a thread — a running conversation log where agents post updates, link PRs, and leave review verdicts. You don't have to dig through GitHub to know what's happening. It's all in one place.

One thing worth understanding: agents don't have persistent sessions. They're spawned on demand by the squad lead when a task needs doing, do their work, and exit. There's no always-on agent process sitting idle. This keeps resource usage low and makes scaling simple — if 5 tasks need coding at once, Sid can spawn 5 Cody instances in parallel. They're the same agent definition running concurrently, each working on a separate task branch.

While humans can add tasks manually to the kanban, the best way to create tasks is to talk to the squad lead directly — via Telegram, in our case. Sid receives a message like "add a dark mode toggle to the settings page" and creates the task, assigns it to the right agent, and kicks off the loop. No dashboard required. Over 150 tasks were created this way. The squad lead knows the API and the skill — it's just faster to describe what you want than to fill out a form.

What Actually Went Wrong

A lot. Here are the real lessons:

Agents forget to call set-review. The most common failure mode. An agent would push code and create a PR and then just... stop. Task stuck in "working" forever. We added a stuck-task recovery mechanism: any task in "working" for more than 30 minutes with a PR deliverable gets auto-moved to review. We also added a second check — any task marked "done" with an open, unmerged PR gets flagged and a review task is automatically created for Hawk.
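
A sketch of the two recovery checks described above. The `TaskSnapshot` field names and the `Recovery` labels are assumptions for illustration. Only the 30-minute threshold and the two rules come from what we actually run.

```typescript
// Hypothetical view of a task; field names are illustrative.
interface TaskSnapshot {
  id: string;
  status: "pending" | "working" | "review" | "done";
  workingSinceMs: number | null; // when the task entered "working"
  prUrl: string | null; // the PR deliverable, if one was created
  prState: "open" | "merged" | "closed" | null;
}

type Recovery = "move-to-review" | "create-review-task" | "none";

const STUCK_AFTER_MS = 30 * 60 * 1000; // 30 minutes

// Decide which recovery action, if any, applies to a task right now.
function recoveryFor(task: TaskSnapshot, nowMs: number): Recovery {
  // Rule 1: "working" for more than 30 minutes with a PR deliverable.
  if (
    task.status === "working" &&
    task.prUrl !== null &&
    task.workingSinceMs !== null &&
    nowMs - task.workingSinceMs > STUCK_AFTER_MS
  ) {
    return "move-to-review";
  }
  // Rule 2: marked "done" while the PR is still open and unmerged.
  if (task.status === "done" && task.prState === "open") {
    return "create-review-task";
  }
  return "none";
}
```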

Convex auth silently returns null. We spent a day debugging why the dashboard showed no data for logged-in users. Turned out convex/auth.config.ts is required for getUserIdentity() to work — without it, all auth calls silently return null. No error, no warning. Just null.
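
One way to avoid chasing a silent null is to fail loudly at the call site. This is a small guard we would suggest, not part of Convex itself. The helper name `assertIdentity` and the hint text are our own.

```typescript
// Throw instead of letting a null identity flow through as "no data".
function assertIdentity<T>(
  identity: T | null,
  hint: string = "is convex/auth.config.ts present and deployed?",
): T {
  if (identity === null) {
    throw new Error(`Not authenticated (${hint})`);
  }
  return identity;
}

// Inside a Convex query or mutation handler this would be used as:
//   const identity = assertIdentity(await ctx.auth.getUserIdentity());
```

With a guard like this, a missing auth config shows up as an error in the function logs rather than an empty dashboard.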

Agent stats get credited to the wrong agent. When Sid (squad lead) merges a PR and calls /api/tasks/complete, the stat credit was going to Sid — not Cody who did the actual work. Cody had 13 "done" tasks. Sid had 89. We fixed this by always crediting task.assignedAgentId rather than whoever called the complete endpoint.
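
The fix is tiny once you see it. Here is a sketch, with `CompletedTask` and `recordCompletion` as illustrative names rather than our real handler. The only field taken from the actual fix is `assignedAgentId`.

```typescript
// Hypothetical minimal task shape for the stats update.
interface CompletedTask {
  id: string;
  assignedAgentId: string; // the agent that did the work
}

// Credit the assigned agent, never the caller of the complete endpoint.
// The caller id is accepted only to show that it is deliberately ignored.
function recordCompletion(
  doneCounts: Map<string, number>,
  task: CompletedTask,
  _callerAgentId: string,
): void {
  const creditedId = task.assignedAgentId;
  doneCounts.set(creditedId, (doneCounts.get(creditedId) ?? 0) + 1);
}
```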

OpenClaw updates can break your config. A couple of times after an OpenClaw update, the config got corrupted and Telegram communication with the squad lead stopped working entirely. The fix: launch Claude Code directly in the OpenClaw config directory and let it diagnose and repair the issue. Claude can read the config files, spot what changed, and fix it — faster than digging through changelogs manually. Worth keeping in mind if your squad lead goes quiet after an update.

Never embed secrets in cron messages. The setup guide originally told users to paste their API key directly into the openclaw cron add message. That stores the key in plaintext. We fixed this — secrets go in env vars, cron messages stay clean.
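
On the agent side, reading the key from the environment can look like the sketch below. The variable name `SQUAD_CONTROL_API_KEY` is hypothetical, and the environment is passed in as a plain object so the helper stays easy to test.

```typescript
type Env = Record<string, string | undefined>;

// Read a required secret from the environment and fail fast if it is missing,
// so a misconfigured agent stops instead of sending unauthenticated requests.
function requireSecret(env: Env, name: string): string {
  const value = env[name];
  if (value === undefined || value.trim() === "") {
    throw new Error(`Missing required environment variable: ${name}`);
  }
  return value;
}

// In Node this would be called as (variable name is an assumption):
//   const apiKey = requireSecret(process.env, "SQUAD_CONTROL_API_KEY");
```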

Agent concurrency has a hard limit. Our OpenClaw instance runs on a $5/month VPS with 4 GB RAM. At one point, more than 5 Cody agents were spawned in parallel — the server buckled, and we had to add swap space just to recover. After that we set agentConcurrency to 2, which keeps the VPS stable and performance consistent. The lesson: more parallelism isn't always better. Two focused agents finishing tasks cleanly beats five agents thrashing each other. If you're running OpenClaw on a Mac Mini or a beefier server, you can comfortably push concurrency higher — the agentConcurrency setting is there for exactly that reason.
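
The idea behind a concurrency cap fits in a few lines. This is a sketch of the general technique, not how OpenClaw implements agentConcurrency internally. `selectToSpawn` and its parameters are illustrative.

```typescript
// On each dispatch pass, spawn only as many agents as there are free slots.
// Anything left over stays pending and is picked up on a later pass.
function selectToSpawn<T>(
  pending: readonly T[],
  runningCount: number,
  agentConcurrency: number,
): T[] {
  const freeSlots = Math.max(0, agentConcurrency - runningCount);
  return pending.slice(0, freeSlots);
}
```

With a limit of 2 and five pending coding tasks, the first pass starts two agents and the other three tasks wait their turn, which is exactly the behavior that kept our VPS upright.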

The Meta Part

There's something strange about building a tool for AI dev teams using an AI dev team to build it.

Every PR in the Squad Control repo from #20 onwards was created by an agent — that's over 120 PRs at time of writing.

As of March 3, 2026: PRs created by agents: 120+ · Tasks completed: 150+ · Merge rate on first review: ~80%

Hawk reviewed most of them. A significant portion of the refactors, UX fixes, and backend plumbing — that was Cody. The billing integration, the admin panel, the Docker provisioning scripts — agent work, reviewed by Hawk, merged by Sid.

More than a third of Cursor's own PRs are now agent-created. We've been living in that world for a few months. As they put it, "the human role shifts from guiding each line of code to defining the problem and setting review criteria." That matches our experience exactly. The humans on this project set direction, made architectural decisions, approved things visually, and handled anything that required taste or judgment. The agents handled the execution.

It worked better than expected. It also broke in ways we didn't expect. Both things are true.

The most surprising thing: the bottleneck wasn't the agents. It was task definition. The clearer and more specific a task description, the better the output. Vague tasks produce vague PRs. Specific tasks with acceptance criteria produce mergeable code on the first try. Writing good tasks is a skill — and it turns out it's a more valuable skill than writing the code yourself.

Bad task: "Add dark mode."

Good task: "Implement a ThemeProvider in Next.js, add a toggle to the navbar, persist the user's preference to their Convex profile on click, and ensure it respects the system theme on first load. No layout shift. Snapshot test updated."

The second version ships on the first try. The first one generates a PR you'll rewrite yourself.

What Squad Control Is

Cursor sees the agent fleet future from inside the IDE. Squad Control is the layer above — the control plane that coordinates the fleet, regardless of what the agents are building. You define agents with names, roles, and persona prompts; connect your repo; install the OpenClaw skill; and set a cron job running. From there, agents pick up tasks, open PRs, and ship — while you stay in the role that matters: setting direction and exercising judgment. The same orchestration model works beyond dev teams too: content pipelines, research, customer support, any knowledge work where you want agents handling execution while humans handle the decisions that require taste.

We're still learning what it means to manage a team that doesn't sleep and never gets bored of refactoring. If you want to see how your workflow changes when you stop writing code and start defining outcomes, come build with us.

Start free at squadcontrol.ai — no credit card required.
